High-Capacity Drives and Performance Considerations

While working with a client the other day, we had an interesting conversation about footprint consolidation. As infrastructure has gotten smaller and faster, we can fit more resources into less space. At ivision, we see this use case on a regular basis: a client is moving their current data center into a colocation facility, and consolidating the footprint to maximize power density and save rack space is crucial. Clients no longer have the luxury of spreading out equipment in sparsely populated racks!

SSD storage arrays have been part of mainstream IT environments for 5+ years now and are largely responsible for the footprint consolidation trend, as these arrays offer a lot of performance and capacity in a small footprint! That increase in performance and capacity means complicated solution sizing has largely been pushed aside. Sure, performance considerations are always reviewed, but the days of building complex storage systems with multiple cabinets of disks just to provide enough low-latency IOPS for the applications are gone! And trust me, we are all thankful for that!

As SSD arrays have taken over the tier 0 and tier 1 workloads, traditional spinning disk arrays have evolved as well, with increases in both drive capacity and enclosure size. My client has several 4TB NL-SAS drive shelves for their tier 2 and tier 3 storage array. The first enclosures contained 24 hard drives, and the newer shelves hold 60 drives. These drives have performed well in their environment, and the storage admins have never been concerned with physical cabinet space and rack units. With a data center move to a colocation facility coming next year, we considered purchasing 10TB NL-SAS drives to increase capacity per rack unit. With 2.5 times the capacity of the 4TB drives (a 150% increase), it’s an easy decision, correct? Well, maybe not.

If we go back to the previously mentioned “complicated solution sizing”, capacity considerations were almost always secondary to performance sizing. For example, the usable capacity requirement may have easily been achieved with four shelves of NL-SAS drives, but the workload required the number of IOPS that only a minimum of eight shelves of NL-SAS drives could provide. It was not unheard of to build a storage system with much more capacity than was needed, simply to satisfy the performance requirement. IOPS are counted per drive, so doubling the number of drives meant doubling the available IOPS, at a lower latency!

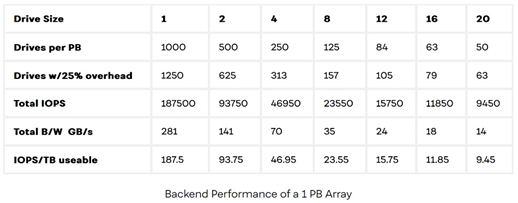
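The sizing logic described above can be sketched in a few lines of Python. This is only an illustration: the function name, the 80 IOPS per drive figure, and the 350 TB / 15,000 IOPS requirements are assumptions picked to reproduce the four-versus-eight shelf example, and it works in raw capacity, ignoring RAID and spare overhead.

```python
import math


def shelves_needed(required_tb, required_iops,
                   drives_per_shelf, drive_tb, iops_per_drive):
    """Return (shelves for capacity, shelves for IOPS, shelves to buy).

    Raw capacity only; RAID, spares, and filesystem overhead are ignored.
    """
    shelf_tb = drives_per_shelf * drive_tb
    shelf_iops = drives_per_shelf * iops_per_drive
    for_capacity = math.ceil(required_tb / shelf_tb)
    for_iops = math.ceil(required_iops / shelf_iops)
    # The larger of the two requirements decides the purchase.
    return for_capacity, for_iops, max(for_capacity, for_iops)


# Hypothetical workload: 350 TB raw and 15,000 IOPS on 24-drive shelves of
# 4 TB NL-SAS, assuming roughly 80 IOPS per drive (illustrative, not a spec).
for_capacity, for_iops, to_buy = shelves_needed(350, 15_000, 24, 4, 80)
print(f"capacity needs {for_capacity} shelves, IOPS needs {for_iops}, buy {to_buy}")
```

With these assumed numbers, capacity alone calls for four shelves while the IOPS requirement calls for eight, so performance ends up driving the purchase.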

As “they” say, the more things change, the more they stay the same. This scenario helped me to realize (and remember) that although storage sizing has become much less complicated due to SSDs and large capacity drives, performance vs. capacity is still critical for the lower-tier HDD arrays. NL-SAS disks are currently available as large as 16TB, which in a 60-drive shelf works out to 960TB, an astonishing (nearly) 1 PB of raw capacity in 4 rack units! The challenge with that much capacity, though, is that the IOPS per usable TB metric has decreased as the drive sizes have grown.

The website www.blocksandfiles.com discussed this very topic in an article last February and provided the following table, which shows how IOPS/TB has decreased as drive sizes have increased.

My client would have been able to increase the capacity per rack unit substantially by moving from 4TB NL-SAS drives to 10TB NL-SAS drives, but as you can see, the available performance per TB would also have been cut by over 50%! That was an unacceptable trade-off to the business, even for lower-tier workloads and user file shares.
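The drop described above follows from simple arithmetic, sketched here in Python. The key assumption (mine, not taken from the article's table) is that an NL-SAS drive delivers roughly the same random IOPS regardless of its capacity; the 80 IOPS figure and the function names are purely illustrative.

```python
# Assumption: a 7.2K RPM NL-SAS drive delivers about the same random IOPS
# whatever its capacity. 80 is an illustrative figure, not a vendor spec.
ASSUMED_IOPS_PER_DRIVE = 80


def iops_per_raw_tb(drive_tb, iops_per_drive=ASSUMED_IOPS_PER_DRIVE):
    """IOPS available for each raw TB on a single drive."""
    return iops_per_drive / drive_tb


def iops_density_drop(old_drive_tb, new_drive_tb):
    """Fractional loss in IOPS/TB when moving to larger drives.

    The per-drive IOPS figure cancels out, so only the sizes matter.
    """
    return 1 - old_drive_tb / new_drive_tb


for size_tb in (4, 10, 16):
    print(f"{size_tb:>2} TB drive: {iops_per_raw_tb(size_tb):.1f} IOPS per raw TB")

print(f"4 TB -> 10 TB: {iops_density_drop(4, 10):.0%} fewer IOPS per raw TB")
```

Under that assumption, the move from 4TB to 10TB drives cuts IOPS per raw TB by 60%, whatever per-drive figure you plug in, which lines up with the "over 50%" reduction above.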

The good news for our clients looking to consolidate their lower-tier data is that there are options! Both NetApp and Pure Storage have storage arrays utilizing QLC NVMe drives. These QLC drives provide high-density storage at a price point competitive with current NL-SAS systems. NetApp’s FAS500f can provide 720TB raw capacity in 4 RU, at a price point that makes sense for lower-tier workloads. Pure Storage’s //C QLC line can provide almost 2 PB of raw capacity in 9 RU! Both are amazingly dense and provide NVMe performance, at a great price point!

ivision strives to find the right solutions for our clients. If you’re interested in learning more about high density storage options, please reach out and we’ll discuss potential solutions with you!